Content-Based Recommendations & the Information Cocoon

I believe most people have noticed that websites and apps display content based on their browsing history. It happens on many platforms, such as TikTok, Twitter and YouTube. If you order burgers two or three times on a food delivery app, it will often recommend burgers to you. Seeing more content that matches your preferences sounds pretty good, but it also brings a critical problem: the information cocoon.
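As a rough illustration of how history-based recommendation can work, here is a minimal sketch of the burger example. It is not how any real food delivery app does it; the dish names, the tags and the functions `build_profile`, `score` and `recommend` are all made up for this post. The idea is simply to count the tags in your order history and rank new dishes by how much they overlap with those counts.

```python
from collections import Counter

# Hypothetical order history and menu; every name and tag here is invented.
history = [
    {"name": "Cheeseburger", "tags": ["burger", "beef"]},
    {"name": "Chicken burger", "tags": ["burger", "chicken"]},
    {"name": "Double burger", "tags": ["burger", "beef"]},
]
candidates = [
    {"name": "Sushi set", "tags": ["sushi", "fish"]},
    {"name": "Bacon burger", "tags": ["burger", "beef"]},
    {"name": "Garden salad", "tags": ["salad", "vegetarian"]},
    {"name": "Veggie burger", "tags": ["burger", "vegetarian"]},
    {"name": "Chicken wrap", "tags": ["wrap", "chicken"]},
]

def build_profile(orders):
    """Count how often each tag appears in the past orders."""
    return Counter(tag for order in orders for tag in order["tags"])

def score(item, profile):
    """Sum the profile counts of the item's tags, normalised by the profile size."""
    total = sum(profile.values()) or 1
    return sum(profile[tag] for tag in item["tags"]) / total

def recommend(items, orders, k=2):
    """Return the k items whose tags overlap most with the order history."""
    profile = build_profile(orders)
    return sorted(items, key=lambda item: score(item, profile), reverse=True)[:k]

top = recommend(candidates, history)
print([item["name"] for item in top])
```

With this toy data the top two recommendations are both burgers, and dishes whose tags never appear in the history score zero, so they have no chance of surfacing. That is the cocoon in miniature.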

What’s the Information Cocoon?

The information cocoon, also known as an echo chamber, refers to a situation where individuals are surrounded by, and primarily exposed to, information and ideas that align with their existing beliefs and perspectives. It can lead to limited exposure to diverse viewpoints and may reinforce pre-existing biases or opinions. This situation has always existed in human society, but AI systems can inadvertently contribute to or solidify these information bubbles by selectively presenting or amplifying content that aligns with a user’s existing views, rather than providing a balanced and diverse range of information.

For example, I often search for political topics on a Q&A website, while my friend usually looks up travel topics on it. After some days, I can only see political content on its home page, while my friend’s home page shows only items about travel. It seems fine, since we both follow our favourite topics, but after a long time I will find there is only politics in my world, and I will have less and less chance to learn about the topics other people care about, such as travel. My initial choice of topics locks my account into an information bubble, and I’m cut off from other topics on this site. Still, this is not the worst situation. Let’s look at a real example.

Display of Different Comments Based on Gender

On a Chinese social media platform, someone posted a video about gender issues. Gender antagonism is a big topic in China, so the video became very popular. However, users found that the comments under the video were ranked with different weights depending on the viewer’s gender. If the viewer was male, the top few comments leaned towards men’s views, and vice versa. As a result, users of one gender weren’t able to see the other’s opinions.

Recommendation systems aim to cater to users’ preferences without truly facilitating exchanges between opposing viewpoints. This objectively exacerbates the opposition between genders, as everyone is simply fervently expressing their own viewpoint. Many people, when they see content aligned with their preferences, reinforce their pre-existing beliefs even further.

Similar things happen not only between genders but also between countries, races, ideologies and religions. Many years ago, I thought the Internet would bring diversity to all humankind and help people from different backgrounds understand each other, but I have also seen that it can intensify hatred, bias and antagonism, especially since smarter recommendation systems appeared. Everyone is confined to a bubble that provides only the information they want to see.

Passively Obtaining Information

When I search for keywords on Google, Wikipedia and so on, I would say I am actively obtaining information. In the age of AI, we have started to use ChatGPT to actively obtain information. But how has AI changed the way we passively obtain information?

In my childhood, I often listened to music on the radio. I never knew which songs the DJ would play each day. I only knew that I liked some songs and disliked others. There were different genres, and the songs had been composed in different decades. Though I didn’t enjoy some of them, I discovered a lot of fresh styles I had never come across before.

However, on some current music platforms, AI learns my behaviour in depth so that it can judge what I would like. I increasingly feel that the songs the system recommends are severely homogeneous. It makes me feel as if there were only one genre in the world, which is very boring, but when I look at other people’s playlists, a whole new world opens up.

I believe that most information is passively acquired rather than actively sought. Recommendation algorithms make passive information acquisition easy but dull.

Is AI to Blame for It?

Firstly, I don’t believe AI is the root cause of all this. Hatred, bias, and opposition have existed in humanity since early times, and each specific culture has been an echo chamber in the past. It’s just that AI has learned all of this and, with its immense computational power, has presented it back to humanity. However, AI does objectively reinforce many of our inherent biases and perceptions, making it difficult for us to understand the world objectively when we acquire knowledge passively. It’s not an exaggeration to say that we are being brainwashed by AI every day.

When I realize that our thoughts are being subtly changed, even possibly controlled, by AI, I can’t help but have concerns about AI. This also involves ethical and moral issues. When I asked ChatGPT whether AI solidifies information bubbles, it replied with:

AI itself does not solidify information bubbles, but it can be influenced by data and algorithms, leading to the formation of information bubbles. If AI only encounters specific types of data or undergoes biased algorithm training, it may generate biases or limit the scope of information. Therefore, ensuring that AI systems have access to diverse and fair data, as well as well-designed algorithms, is crucial to avoid the issue of information bubbles.

Therefore, I believe that software service providers are the ones truly obligated to improve this situation. They have the capability to set limits and rules for AI, adjust algorithms, provide diversity in content, and mitigate some of the societal conflicts.
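To show what “adjusting algorithms” to provide diversity could look like, here is a minimal sketch of one possible approach: a greedy re-ranking that lowers the score of an item each time its topic has already been picked. This is only my own illustration, not what any platform actually does; the items, the relevance numbers, the `penalty` value and the function `rerank_with_diversity` are all assumptions.

```python
from collections import Counter

# Hypothetical feed items with a relevance score from some upstream recommender.
items = [
    {"title": "p1", "topic": "politics", "relevance": 1.0},
    {"title": "p2", "topic": "politics", "relevance": 0.9},
    {"title": "p3", "topic": "politics", "relevance": 0.7},
    {"title": "t1", "topic": "travel", "relevance": 0.3},
    {"title": "m1", "topic": "music", "relevance": 0.2},
]

def rerank_with_diversity(candidates, k=3, penalty=0.5):
    """Greedily pick k items, subtracting `penalty` for every earlier pick on the same topic."""
    remaining = list(candidates)
    picked = []
    topic_counts = Counter()  # how many picked items each topic already has
    while remaining and len(picked) < k:
        best = max(
            remaining,
            key=lambda item: item["relevance"] - penalty * topic_counts[item["topic"]],
        )
        picked.append(best)
        remaining.remove(best)
        topic_counts[best["topic"]] += 1
    return picked

feed = rerank_with_diversity(items)
print([item["title"] for item in feed])
```

Ranking by relevance alone would fill all three slots with politics. With the penalty, the third slot goes to the travel item instead. A larger `penalty` pushes the feed towards more variety, and setting it to 0 gives back the plain preference-only ranking.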

I’m glad to see that the EU has already established laws addressing the ethical and moral issues brought by AI. There are still many ethical concerns posed by AI, such as the ability to mimic human faces and voices, potentially spreading false information. Besides, there are numerous challenges that I think we should be concerned about, prompting the need for more reasonable regulations to mitigate these issues. More importantly, it’s crucial to raise awareness among more people about these potential risks.

Nowadays GPT 4.0 has brought the topic of AI unprecedented attention. While many people enjoy this new technology, some express their worries.

Perhaps you heard these from the Internet and your friends:

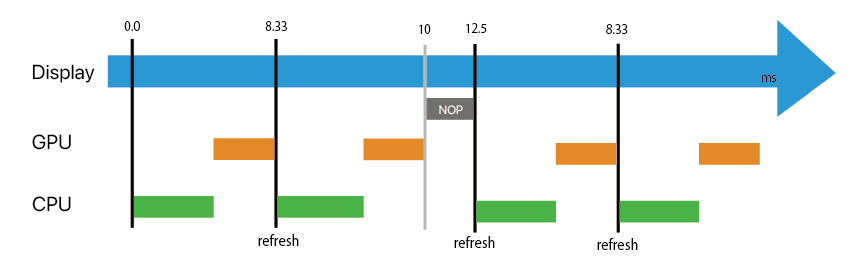

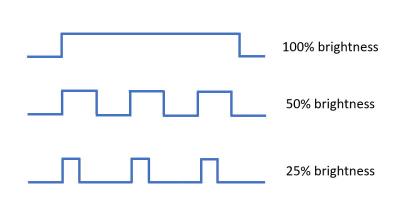

If the brightness is set to 50%, the light is simply kept on for 50% of each cycle and off for the other 50%, at a fixed frequency. This is an easy (or cost-effective) way to achieve the goal. Nevertheless, it has serious drawbacks, such as flicker that may cause eye strain and headaches. But that isn’t today’s topic.
